
New and improved - AssemblyAI Q3 recap

Check out our quarterly wrap-up for a summary of the new features and integrations we launched this quarter, as well as improvements we made to existing models and functionality.

Claude 3 in LeMUR

We added support for Claude 3 in LeMUR, allowing users to prompt the following LLMs in relation to their transcripts:

- Claude 3.5 Sonnet

- Claude 3 Opus

- Claude 3 Sonnet

- Claude 3 Haiku

Check out our related blog post to learn more.
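As a rough illustration of what prompting one of these models might look like, here is a minimal sketch that builds a LeMUR task request body. The field names (`transcript_ids`, `prompt`, `final_model`) and the `anthropic/...` model identifiers are assumptions based on the public LeMUR API at the time of writing, and `build_lemur_task` is a hypothetical helper, not part of any SDK. Check the API reference before relying on them.

```python
import json

# Assumed mapping from the model names listed above to LeMUR `final_model` values.
CLAUDE_3_MODELS = {
    "Claude 3.5 Sonnet": "anthropic/claude-3-5-sonnet",
    "Claude 3 Opus": "anthropic/claude-3-opus",
    "Claude 3 Sonnet": "anthropic/claude-3-sonnet",
    "Claude 3 Haiku": "anthropic/claude-3-haiku",
}


def build_lemur_task(transcript_ids, prompt, model_name="Claude 3.5 Sonnet"):
    """Build a request body for a LeMUR task (hypothetical helper)."""
    if model_name not in CLAUDE_3_MODELS:
        raise ValueError(f"Unknown model: {model_name}")
    return {
        "transcript_ids": list(transcript_ids),
        "prompt": prompt,
        "final_model": CLAUDE_3_MODELS[model_name],
    }


# Placeholder transcript ID; replace with the ID of a completed transcript.
payload = build_lemur_task(
    ["YOUR_TRANSCRIPT_ID"],
    "Summarize the key decisions made in this call.",
    model_name="Claude 3 Haiku",
)
print(json.dumps(payload, indent=2))
```

The sketch only assembles the payload; sending it would be a POST to the LeMUR task endpoint with your API key in the `authorization` header.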

Automatic Language Detection

We made significant improvements to our Automatic Language Detection (ALD) model, which now supports 10 new languages for a total of 17, with best-in-class accuracy in 15 of those 17 languages. We also added a customizable confidence threshold for ALD.

Learn more about these improvements in our announcement post.
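To give a sense of how the confidence threshold might be used, here is a minimal sketch that prepares (but does not send) a transcription request with ALD enabled. The parameter names `language_detection` and `language_confidence_threshold` and the endpoint URL are assumptions based on the public API reference. `build_ald_request`, the audio URL, and the API key are placeholders.

```python
import json
import urllib.request

# Assumed transcript endpoint; verify against the current API reference.
API_URL = "https://api.assemblyai.com/v2/transcript"


def build_ald_request(audio_url, api_key, threshold=0.5):
    """Prepare a POST request that enables ALD with a confidence threshold."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be between 0 and 1")
    payload = {
        "audio_url": audio_url,
        "language_detection": True,
        # Assumed behavior: detection results below this confidence are rejected.
        "language_confidence_threshold": threshold,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"authorization": api_key, "content-type": "application/json"},
        method="POST",
    )


request = build_ald_request(
    "https://example.com/audio.mp3", "YOUR_API_KEY", threshold=0.4
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing the prepared request to `urllib.request.urlopen` would submit it. A lower threshold accepts more uncertain detections, while a higher one is stricter.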

We released the AssemblyAI Ruby SDK and the AssemblyAI C# SDK, allowing Ruby and C# developers to easily add SpeechAI to their applications with AssemblyAI. The SDKs let developers use our asynchronous Speech-to-Text and Audio Intelligence models, as well as LeMUR through a simple interface.

Learn more in our Ruby SDK announcement post and our C# SDK announcement post.

This quarter, we shipped two new integrations:

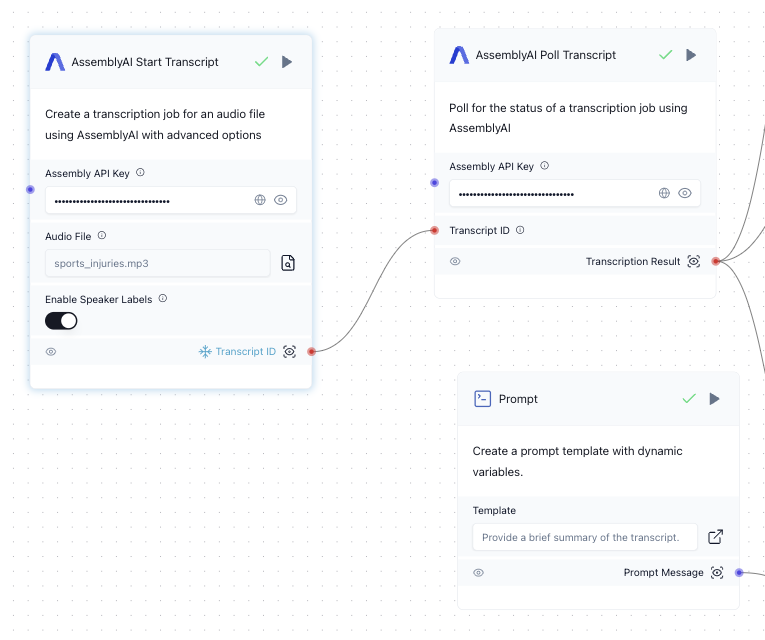

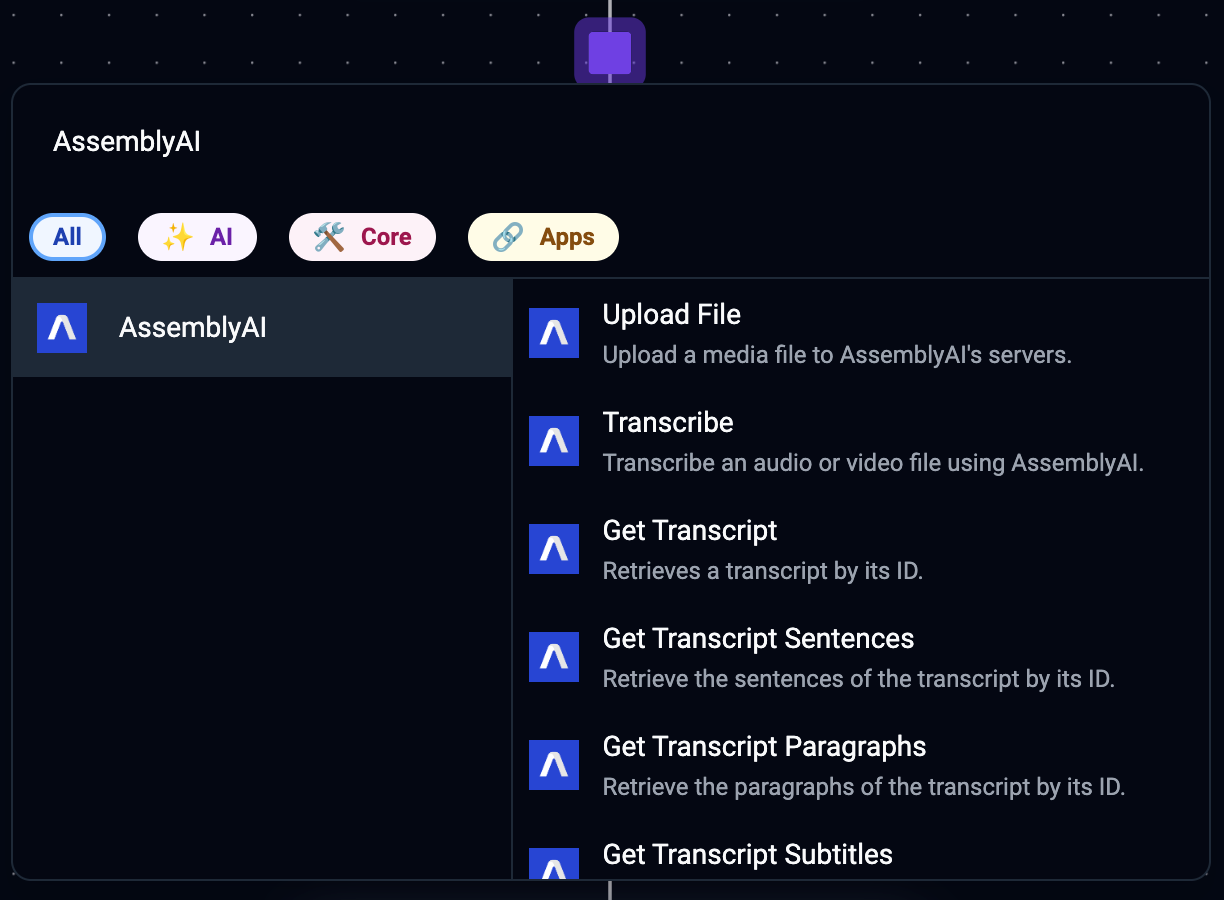

Activepieces 🤝 AssemblyAI

The AssemblyAI integration for Activepieces allows no-code and low-code builders to incorporate AssemblyAI's powerful SpeechAI in Activepieces automations. Learn how to use AssemblyAI in Activepieces in our Docs.

Langflow 🤝 AssemblyAI

We've released the AssemblyAI integration for Langflow, allowing users to build with AssemblyAI in Langflow - a popular open-source, low-code app builder for RAG and multi-agent AI applications. Check out the Langflow docs to learn how to use AssemblyAI in Langflow.

Assembly Required

This quarter we launched Assembly Required - a series of candid conversations with AI founders sharing insights, learnings, and the highs and lows of building a company.

Check out the first conversation in the series, between Edo Liberty, founder and CEO of Pinecone, and Dylan Fox, founder and CEO of AssemblyAI.

We released the AssemblyAI API Postman Collection, which gives Postman users a convenient way to try our API, with endpoints for Speech-to-Text, Audio Intelligence, LeMUR, and Streaming. Like our API reference, the Postman collection also provides example responses so you can quickly browse endpoint results.

Free offer improvements

This quarter, we improved our free offer with:

- $50 in free credits upon signing up

- Access to usage dashboard, billing rates, and concurrency limit information

- Transfer of unused free credits to account balance upon upgrading to Pay as you go

We released 36 new blog posts this quarter, from tutorials to projects to technical deep dives. Here are some of them:

- Build an AI-powered video conferencing app with Next.js and Stream

- Decoding Strategies: How LLMs Choose The Next Word

- Florence-2: How it works and how to use it

- Speaker diarization vs speaker recognition - what's the difference?

- Analyze Audio from Zoom Calls with AssemblyAI and Node.js

We also released 10 new YouTube videos, demonstrating how to build SpeechAI applications and more, including:

- Best AI Tools and Helpers Apps for Software Developers in 2024

- Build a Chatbot with Claude 3.5 Sonnet and Audio Data

- How to build an AI Voice Translator

- Real-Time Medical Transcription Analysis Using AI - Python Tutorial

We also made improvements to a range of other features, including:

- Timestamp accuracy, with 86% of timestamps accurate to within 0.1s and 96% of timestamps accurate to within 0.2s

- Enhancements to the AssemblyAI app for Zapier, supporting 5 new events. Check out our tutorial on generating subtitles with Zapier to see it in action.

- Various upgrades to our API, including improved error messaging and scaling improvements that reduce p90 latency

- Improvements to billing, now alerting users upon auto-refill failures

- Speaker Diarization improvements, especially robustness in distinguishing speakers with similar voices

- A range of new and improved Docs

And more!

We can't wait for you to see what we have in store to close out the year 🚀